Human history can be reduced to moments of discovery – and leaps in medical technology are among the most significant

Medicine is undergoing similar revolutions today, but the full impact of many of these advances remains to be seen. This is our selection of the most transformational technologies shaping the field.

1. Sharpening the genetic scissors

In the past, gene therapy has used relatively crude mechanisms, with new strands of DNA being injected into human cells using harmless viruses as vectors. This strategy has worked for some diseases that are caused by a missing gene, but not for those requiring a genetic sequence to be snipped out or replaced. The discovery in 2007 of CRISPR, a nifty pair of genetic scissors, has opened up a new era of genetic engineering by giving scientists the ability to edit human DNA. Potentially, this powerful new technology could allow abnormal genes, such as those for cystic fibrosis, to be deleted.

CRISPR (clustered regularly interspaced short palindromic repeats)/Cas9 was first recognised as a mechanism used by bacteria to fight viruses. These viruses, called bacteriophages, replicate by inserting DNA into bacteria. The bacteria fight back by deploying CRISPR/Cas9, which chops up and degrades the invading DNA.

Cas9 (CRISPR associated protein) is the enzyme that actually makes the cut. CRISPR is a strand of RNA that identifies the region on the DNA and moves the Cas9 clippers to the correct location.

By modifying the CRISPR system and RNA sequence, it should be possible for scientists to edit any section of the human genome.
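The search-and-snip idea can be pictured with a toy sketch. It assumes DNA is a plain text string and that the guide matches its target letter for letter, which is a deliberate simplification of the real chemistry; the function names `find_target` and `edit` and the example sequences are invented for illustration.

```python
def find_target(dna: str, guide: str) -> int:
    """Return the index of the first exact match of the guide, or -1 if absent."""
    return dna.find(guide)


def edit(dna: str, guide: str, replacement: str = "") -> str:
    """Cut out the matched stretch and splice in a replacement (empty = delete)."""
    pos = find_target(dna, guide)
    if pos == -1:
        return dna  # no match, so nothing is cut
    return dna[:pos] + replacement + dna[pos + len(guide):]


# Hypothetical sequences, chosen only to make the example easy to follow.
dna = "ATGCCGTTAGGCTTACGATCG"
guide = "TTAGGC"

deleted = edit(dna, guide)             # target snipped out
replaced = edit(dna, guide, "TTACGC")  # target swapped for a new sequence
print(deleted)   # ATGCCGTTACGATCG
print(replaced)  # ATGCCGTTACGCTTACGATCG
```

Changing the `guide` string is all it takes to aim the sketch at a different stretch of the sequence, which mirrors why a programmable RNA guide makes the system so flexible.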

So far CRISPR has been successful in treating disease in experimental animals. A gene mutation that causes muscular dystrophy has been corrected in mice, and scientists have disrupted a Huntington’s disease gene.

In March, a paper published in Nature announced that CRISPR/Cas9 significantly diminished HIV-1 replication in infected primary CD4+ T-cell cultures, raising the possibility that CRISPR could one day be used to cure AIDS.

In the past 12 months, two research groups in China also reported using CRISPR to edit human embryos. One group attempted to make the embryo resistant to HIV infection while the other targeted a gene that causes the blood disorder beta-thalassaemia.

While the medical applications are tantalising, before CRISPR can be used safely in humans researchers must ensure the Cas9 enzyme does not make unintended cuts, which could have serious consequences, such as inducing cancer.
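One way to picture the risk of unintended cuts is to scan a sequence for stretches that almost match the guide. The sketch below is a toy model, assuming DNA as a plain string and a simple count of mismatched letters; the names `mismatches` and `candidate_sites`, the one-mismatch tolerance and the example sequence are all hypothetical, and real off-target prediction is considerably more involved.

```python
def mismatches(a: str, b: str) -> int:
    """Count positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))


def candidate_sites(dna: str, guide: str, max_mismatches: int = 1) -> list[tuple[int, int]]:
    """Return (position, mismatch count) for every window close to the guide."""
    n = len(guide)
    hits = []
    for i in range(len(dna) - n + 1):
        d = mismatches(dna[i:i + n], guide)
        if d <= max_mismatches:
            hits.append((i, d))
    return hits


# Hypothetical sequence containing one perfect and one near-perfect match.
dna = "ATGCCGTTAGGCTTACGATCGTTAGCCAA"
guide = "TTAGGC"
hits = candidate_sites(dna, guide)
print(hits)  # [(6, 0), (21, 1)]
```

The site with zero mismatches is the intended target; the site with one mismatch is the kind of look-alike location where a stray cut would be a concern.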

2. Doctor in your pocket

A wave of technology leveraging the computing power of the smartphone has hit the market in recent years. With over 1.75 billion smartphones on the planet in 2014, the potential for health monitoring and health-related communication is huge. Headline innovations in smartphone technology include portable ultrasound, HIV and syphilis testing, digital otoscopes and electrocardiograms.

Of these, only a few have been FDA-approved, including Philips’ Lumify ultrasound app and AliveCor’s Kardia mobile ECG. Pitched at hospital emergency departments, the ultrasound app promises to speed up diagnosis. The device has two components: an ultrasound transducer device, which plugs into the smartphone, and software that allows the user to display and share images.

Kardia, targeted at consumers, takes an ECG using a smartphone or Apple Watch, and alerts users if atrial fibrillation is detected.

Mobiles are also becoming labs. For instance, in 2015 researchers at Columbia University invented a cheap HIV and syphilis test, powered by a smartphone. The device plugs into the audio jack of a smartphone and processes a small blood sample using an enzyme-linked immunosorbent assay in 15 minutes.

Smartphone health applications are rolling out at a remarkable rate. Earlier this year, Swedish researchers revealed a software-based method that allows non-clinicians to diagnose otitis media. To make the diagnosis, a digital otoscope takes images of the eardrum, which are then compared with images stored in the cloud. The method has an accuracy of 78.7% (compared with 64% to 80% for the traditional visual method).

https://www.youtube.com/watch?v=q_7gNsEtIXw

3. Robotic surgery

Robots have made a grand entrance into operating theatres in recent years, with surgeons using remote-controlled robots to perform prostate cancer surgery, colon surgery and hernia repairs.

The hands-off approach means surgeons can operate at distant locations, while an anaesthetist and on-site surgeon monitor the patient. The first-ever trans-Atlantic operation was performed in 2001 by surgeons in New York operating on a patient in France.

In 2006, a Canadian surgeon performed a mock cholecystectomy on a training model 19 metres below sea level, to show the concept’s potential in treating astronauts on deep space missions.

Telesurgery went mainstream in 2000, with the FDA approving the first robotic system for general laparoscopic surgery. The da Vinci Surgical System operates in hospitals all over the world and was used to perform over 200,000 surgeries in 2012, mainly prostatectomies and hysterectomies.

These systems filter out the surgeon’s tremors and allow several more degrees of rotation than the human hand. This extra manoeuvrability can result in smaller incisions and more precise tumour excision.
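Tremor filtering can be illustrated with a simple smoothing filter. The sketch below uses an exponential moving average, a generic low-pass filter; it is an assumption chosen for illustration, not a description of how any commercial surgical robot works, and the function name `filter_tremor` and the smoothing factor `alpha` are hypothetical.

```python
def filter_tremor(positions: list[float], alpha: float = 0.2) -> list[float]:
    """Smooth a series of hand positions with an exponential moving average.

    A small alpha lets slow, deliberate movement through while damping
    rapid back-and-forth jitter.
    """
    if not positions:
        return []
    smoothed = positions[0]
    out = []
    for p in positions:
        smoothed = alpha * p + (1 - alpha) * smoothed
        out.append(smoothed)
    return out


# Hypothetical input: a hand held at position 10 with a +/-1 unit tremor.
raw = [10 + (1 if i % 2 == 0 else -1) for i in range(50)]
steady = filter_tremor(raw)

raw_jitter = max(abs(v - 10) for v in raw[20:])
filtered_jitter = max(abs(v - 10) for v in steady[20:])
print(raw_jitter, round(filtered_jitter, 3))
```

In this toy run the filtered jitter is a small fraction of the raw jitter. The trade-off is a slight lag behind the surgeon's intended movement, which is why the smoothing factor would need careful tuning in any real system.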

While not all types of robotic surgery currently result in superior clinical outcomes, it is likely that at least for some procedures, robots will take over in future. A study published in May reported the case of a robot that performed anastomosis surgery on live pigs more effectively than a human-assisted robot and a human surgeon. To achieve this feat, the research team combined near-infrared fluorescent marking and 3D imaging, creating an image of the tissue.

4. Artificial intelligence

When presented with a scan, the trained eye of a doctor draws on years of experience to detect abnormalities. But a computer can rival this expertise by rapidly comparing an image with millions of others stored in the cloud.

Artificial intelligence is taking off in healthcare, with companies already setting their sights on early detection of tumours, dementia and hypoglycaemic episodes. A recent report from consulting company Frost & Sullivan predicts that AI investment in healthcare will reach $6 billion by 2021.

Leading the charge is IBM’s supercomputer, Watson. Hundreds of companies have partnered with Watson Health, paying a monthly fee to access its cognitive systems.

Novo Nordisk and Medtronic aim to use Watson’s capacities to help people with diabetes manage the timing and dosage of insulin injections by analysing readings from continuous blood-sugar monitors. Glucose-sensitive electrodes are inserted under the skin and connected to a smartphone.

The results of a pilot study of 600 patients released in January showed that Watson predicted hypoglycaemic episodes up to three hours before they occurred, using algorithms based on data from 150 million patient days.
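To give a feel for what predicting a low from monitor readings involves, here is a deliberately naive sketch that fits a straight line to recent readings and extrapolates. It is not Watson's method, which the source describes only as algorithms trained on a very large dataset. The function name `hours_until_low`, the five-minute sampling interval and the 3.9 mmol/L threshold are assumptions made for the example.

```python
from typing import Optional


def hours_until_low(readings: list[float],
                    interval_min: float = 5.0,
                    threshold: float = 3.9) -> Optional[float]:
    """Estimate hours until glucose (mmol/L) falls to the threshold.

    readings are evenly spaced, oldest first. Returns None when the trend
    is flat or rising, or when there are too few readings to fit a line.
    """
    n = len(readings)
    if n < 2:
        return None
    latest = readings[-1]
    if latest <= threshold:
        return 0.0
    # Least-squares slope, in mmol/L per reading interval.
    mean_x = (n - 1) / 2
    mean_y = sum(readings) / n
    numer = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(readings))
    denom = sum((x - mean_x) ** 2 for x in range(n))
    slope = numer / denom
    if slope >= 0:
        return None
    steps = (latest - threshold) / -slope
    return steps * interval_min / 60


# Hypothetical readings falling by 0.1 mmol/L every five minutes.
falling = [7.0, 6.9, 6.8, 6.7, 6.6]
eta = hours_until_low(falling)
print(round(eta, 2))  # 2.25
```

A straight-line guess like this ignores meals, insulin doses and exercise, which is exactly the kind of context a system trained on millions of patient days is meant to capture.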

IBM’s research team is also developing a five-minute Q&A test for the early detection of dementia. Watson’s test works by comparing voice recordings of individuals with hundreds of examples, looking at tone, pauses, hesitation and continuity of speech rather than content. A stream of start-ups moving into AI in healthcare is not far behind.

https://www.youtube.com/watch?v=ZPXCF5e1_HI

5. Power of the mind

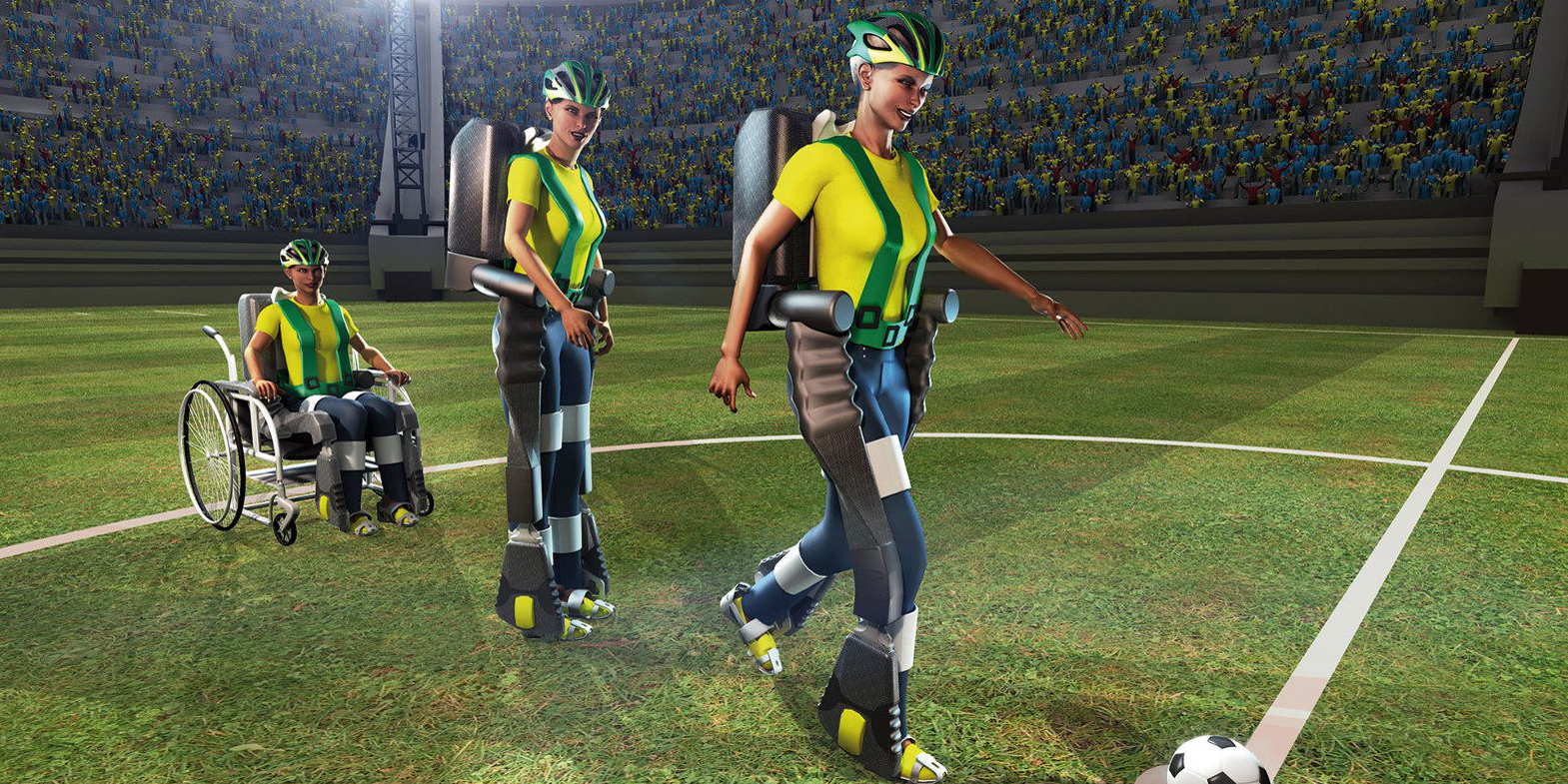

At the 2014 FIFA World Cup, a man with paraplegia made the ceremonial first kick using a mind-controlled exoskeleton. The feat demonstrated just how far brain-computer interfaces have come in realising the dream of restoring function to people with spinal damage.

The hydraulic robotic suit works by reading and decoding brain signals from a sensor worn on the head. Pressure, temperature and speed sensors on the soles of the feet convey information about movement to the arms via vibration.

Thought-controlled prosthetics, although in their early days, also aim to allow movement in people with neuromuscular disorders such as cerebral palsy, stroke or spinal cord injury.

While many challenges remain in terms of cost, reliability and functionality, great strides have been made in this mindreading technology. In 2011, a woman with hemiplegia following a stroke lifted a cup using a mind-controlled robot arm.

In 2012, a similar robotic arm allowed a person with quadriplegia to perform basic functions, including feeding themselves. Last year, researchers in Germany and Korea revealed an exoskeleton, similar to the body suit on display at FIFA, that facilitates walking by using a brain-computer interface.

https://www.youtube.com/watch?v=hkOwS7nAevo

6. Beyond 3D printing

Printing in three dimensions has proven to be a transformational force in medicine. With additive manufacturing being used to construct custom tracheas, skull fragments, dental implants and spinal replacements, it seems a week doesn’t pass without a new application being reported.

The 3D printing process also opens up new avenues for medication manufacture and drug delivery. For example, last year the anti-epileptic, Spritam, became the first 3D-printed pill to gain FDA approval. With a more porous structure than traditional tablets, Spritam disintegrates in the mouth with just a small sip of liquid, making it easier for those who find it difficult to swallow.

3D printing can also allow medications to be custom-made in terms of dose for each patient, and scientists to play with the shape of drugs, which can affect absorption rates. Researchers at University College London, for instance, are developing 3D-printed drugs in the form of pyramids and doughnuts (a pyramid-shaped pill releases a drug more slowly than a cube or sphere).

But it doesn’t stop there; the hottest new technology is 4D printing. Pioneered by scientists at MIT in the US, this takes 3D printing one step further by creating objects that change their shape over time.

These materials are printed as strings or sheets, which then fold into 3D objects in response to water, heat, light or pressure. “It’s like naturally embedding smartness into the materials,” explains an MIT research scientist.

4D materials are particularly useful in children, who can outgrow implants quickly. In April last year, a US team used 4D printing to construct resorbable polycaprolactone airway splints for three infants with tracheobronchomalacia.

7. Greater genomic literacy

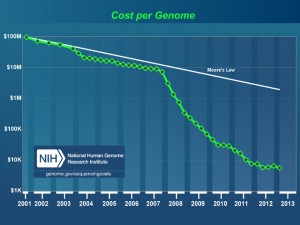

After a slow and expensive start, the 13-year, $3 billion Human Genome Project, completed in 2003, has dramatically increased the quality of genetic data publicly available to researchers.

Prior to the project, researchers knew the genetic basis of around 60 disorders. Now, around 5000 conditions have a known genetic component.

In the past decade, pharmaceutical companies have also embraced pharmacogenetics, which can play an important role in identifying responders and non-responders to medications, avoiding adverse events, and optimising drug doses.

While PCR remains the standard technology for genetic testing, routine whole genome sequencing is becoming a reality.

Sometimes called molecular photocopying, and developed in the 1980s, PCR was integral to sequencing the human genome.

But now, with the cost of sequencing a human genome plunging to $US1000 ($1390) in 2014, the era of personalised or precision medicine is much closer.

In another mind-bending twist, scientists are now talking about synthesising the entire human genome from scratch. A group of 150 scientists attended a closed meeting at Harvard Medical School in the US this month to reportedly discuss the possibility of manufacturing human chromosomes. The aim wasn’t to create humans, they said, but to improve the ability to synthesise DNA in general, which could be applied to various animals, plants and microbes.

8. Nanotechnology

Nanomaterials are minuscule particles in the size range of biological molecules, such as proteins and DNA. The transfer of materials into the nanodimension changes their physical properties, and as such, nanoparticles can slip seamlessly in and out of human cells, carrying bioactive compounds to precise locations.

Thus they have a myriad of medical applications, including imaging, drug delivery and cancer treatment. The unique size and properties of nanoparticles help overcome classic problems of drug delivery, such as resistance and solubility. Their large surface area to volume ratio lends itself to coating with antibodies or proteins, which stick to target sites. This can reduce drug side effects, as nanoparticles release active compounds only in specific locations rather than throughout the body.
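The point about surface area to volume ratio follows from simple geometry: for a sphere the ratio works out to 3 divided by the radius, so it climbs steeply as particles shrink. The short sketch below checks this numerically; the two radii (a 50-nanometre particle and a 5-millimetre bead) are hypothetical sizes picked only for comparison.

```python
import math


def surface_to_volume(radius_m: float) -> float:
    """Surface area to volume ratio of a sphere, in units of 1/metre."""
    area = 4 * math.pi * radius_m ** 2
    volume = (4 / 3) * math.pi * radius_m ** 3
    return area / volume  # simplifies to 3 / radius


nano = surface_to_volume(50e-9)  # hypothetical 50 nm particle
bead = surface_to_volume(5e-3)   # hypothetical 5 mm bead
ratio = nano / bead
print(f"{ratio:.0f}")  # 100000
```

Gram for gram, the smaller particles in this comparison expose about 100,000 times more surface, which is what leaves so much room for a coating of antibodies or proteins.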

In Australia, a number of nanodrugs have been approved by the TGA, including paclitaxel for chemotherapy and aprepitant for chemotherapy-induced vomiting, and many more are undergoing clinical trials.

“Nanotechnology offers an exquisite sensitivity and precision that is difficult to match with any other technology,” says Professor Sam Gambhir from Stanford University in the US.

It’s not just cancer treatment set to benefit from advances in nanotechnology. Last year, a study in Advanced Functional Materials found that magnetic nanoparticles, loaded with the clot-busting agent tPA, could destroy blood clots 100 to 1000 times faster than conventional methods.

By coating the nanoparticles in albumin, the researchers managed to camouflage the compound, allowing the nanoparticles to reach the clot before being recognised by the immune system. Iron oxide was chosen for the core because the researchers plan to use nanoparticles alongside magnetic resonance imaging.

The next step in nanoparticle engineering is ‘programming’ nanoparticles to perform multiple functions. In the future nanoparticles may be able to target tumours, fluoresce for bioimaging purposes, release drugs following an energy signal such as an ultrasound, or vibrate and produce heat that kills tumour cells.